South African Journal of Childhood Education

On-line version ISSN 2223-7682

Print version ISSN 2223-7674

SAJCE vol.4 n.2 Johannesburg 2014

ARTICLES

South African teachers' use of national assessment data

Anil Kanjee (I); Qetelo Moloi (II)

(I) Tshwane University of Technology. Email: KanjeeA@tut.ac.za

(II) Department of Basic Education

ABSTRACT

This paper reports on primary school teachers' perceptions and experiences of the challenges and prospects of using data from the Annual National Assessments (ANAs). While the majority stated that information from the ANAs can assist teachers to improve learning, responses on the use of the ANAs in the classroom were mixed: most reported that teachers did not know how to use ANA results to improve learning, and that no plans were in place at their schools for the use of ANA data. A significant proportion also indicated that they received little or no support from the school district on how to use ANA results. These findings were consistent across the school quintiles as well as across the foundation and intermediate phases. Given the potential value of the ANAs, the paper highlights two initiatives aimed at enhancing the meaningful use of ANA results to improve learning and teaching in schools.

Keywords: Annual National Assessments, teacher understanding of assessment, use of assessment information, large-scale testing

Introduction

In recent years, the focus of the transformation agenda for the post-apartheid schooling sector in South Africa has shifted towards assessment as a key driver for improving teaching and learning in schools (Kanjee & Sayed 2013). Not only has assessment been entrenched in the curriculum and learning programmes, but the country has also embarked on a census-based system of Annual National Assessments (ANAs)¹ for all learners in primary school and some in high school (RSA DBE 2012a). A vexing problem in South African schools has been that, in spite of relatively larger investment in education compared to neighbouring countries, increased inputs do not seem to be matched by improved learning outcomes (Chisholm & Wildeman 2013). Both regional and international benchmarking studies continue to show that the level and quality of learning outcomes in South Africa's schools tend to be lower than those of countries that invest significantly less in their schooling sectors (Moloi & Chetty 2010). The ANAs were planned as one measure that could increase awareness of the challenges of teaching and of learners' struggles. The claim was that such a testing intervention would provide teachers with relevant information for developing appropriate interventions to improve teaching and learning (RSA DBE 2012a).

First implemented in 2011, the ANAs represent one of the largest education initiatives undertaken in the country, with the primary aim of improving learning through effective teaching. The ANAs comprise the testing of all Grade 1 to 6 learners and all Grade 9 learners in languages and mathematics, totalling approximately six million learners in all public schools in the country. Although three cycles of the ANA have been completed, there has been limited research on the extent to which the aims and objectives of the ANAs are being addressed in schools, or on the challenges and successes that teachers encounter in using them to improve teaching. It is on this aspect that this paper focuses. Specifically, the authors were interested in understanding teacher perceptions regarding 1) their level of preparedness to effectively administer the ANAs; 2) the value of test information for their teaching and for improving learning; and 3) their experiences of how ANA results are used in classroom practice. In addition, the paper seeks to determine whether any differences exist among teachers across the different school quintiles.²

The paper begins with a review of the use (and value) of national assessment surveys, followed by an overview of the ANAs and their application in South African schools. Next, the methodology and findings of the study are presented, followed by a discussion of the key challenges and prospects facing teachers in their quest to use national assessment results for improving learning and teaching. The paper concludes by presenting options for addressing the challenges, with specific focus on the dearth of knowledge regarding the value and effective use of information from national assessment studies in South African schools.

National assessments: how useful are they?

A study of the related literature indicates a conflation of the terms 'national' and 'large-scale' assessments, with a range of definitions put forward and used to indicate their meaning and use (Kellaghan & Greaney 2004; Pellegrino, Chudowsky & Glaser 2001). Given their specific application and purpose in the South African context, the ANAs are considered a national, census-based survey. In this paper, national assessments are defined as:

[T]he process of obtaining relevant information from an education system to monitor and evaluate the performance of learners and other significant role-players as well as the functioning of relevant structures and programs within the system for the purpose of improving learning.

(Kanjee 2007:13)

Kanjee (2007) argues that the defining characteristic of any national assessment must locate the learner as the most significant participant of a country's education system, and thus the improvement of learning, arguably by way of teaching, as the most critical outcome to attain. Similarly, Abu-Alhija (2007) notes that the general purpose of any national assessment should be to improve educational outcomes, and lists four key functions of these assessments: 1) to ensure accountability; 2) to assure quality control; 3) to provide instructional diagnosis; and 4) to identify needs and allocate resources.

Highlighting the instructional diagnosis³ function, Abu-Alhija (2007) contends that this function has generated the most controversy regarding the use of these assessments. Some of the negative consequences noted are that teachers will 'teach to the test', that is, they will focus mainly on test-taking strategies and therefore spend less time on actual teaching; or that teachers are not adequately equipped to use assessment results effectively for the purpose of improving their teaching/instruction (Kellaghan, Greaney & Murray 2009; Shirley & Hargreaves 2006). However, others highlight the positive role that national assessments can play in improving learning and teaching. Gilmore (2002) argues that through careful design and implementation, national assessments can have a positive impact on teachers' assessment capacity and the assessment activities in classrooms. Similarly, Popham (2009) argues that national assessments can have a positive effect on classroom practices when these assessments are used to provide relevant and usable information to teachers to improve teaching practices in the classroom.

While national assessments, through the information that they generate, have the potential to lead to the identification of practices that may be responsible for underperformance, what is critical is how information obtained from these national assessments is utilised to impact on education reform in general, and improving learning outcomes in particular (Schiefelbein & Schiefelbein 2003). However, in a review conducted by Kellaghan et al (2009), the authors noted the underuse of the data as a shortcoming in many countries where national assessments are conducted.

Park (2012) and Kellaghan et al (2009) identified a number of constraints to teachers' use of assessment data: 1) the irrelevance of data derived from these tests is a demotivating factor that discourages teachers from trusting the validity of the data; 2) when data was made available at a time that teachers did not need it, teachers were less likely to use it; 3) data is often reported in formats that are either not familiar to teachers or are perceived to be irrelevant to what happens in the classroom; 4) teachers often find their own classroom assessment data more relevant to what they are doing; 5) teachers often lack the relevant skills to analyse the data; and 6) the absence of strong leadership and support from school district personnel in terms of promoting a culture of evidence-based decision-making inhibits the potential of teachers to use data; where district staff provided good role models of data use, teachers grew to respect and value the importance of data.

With regard to interventions that may help teachers to use data, Marsh (2012:3) proposes a "theory of action" built around a series of "leverage points". According to this author, support for teachers could focus on helping them to collect valid and relevant (raw) data in their own classrooms as a critical leverage point. The next leverage point could be how to analyse the data and transform it into useful information, graphical or textual, for the intended purpose. A further leverage point is bringing one's professional and pedagogic expertise to bear on the assessment information (processed data) and using this mix to generate 'knowledge' about the learners, learning, and learning how to learn. The final leverage point is the application of the acquired knowledge to respond to learners and assist them to learn better and solve their problems. In this regard, subject advisors and other district officials should play a major role in guiding teachers. It is also important to note that teachers need support on how to use data to evaluate the impact of interventions and to provide feedback to learners in ways that will inform, motivate and empower them.

Marsh's (2012) framework makes the point that data does not speak for itself. For teachers to use data efficiently, effectively and successfully in their teaching, they will need support at every step of the way until a pervasive culture of data utilisation (and usability of data) has taken root in the education system. The success of the introduction and implementation of the ANAs needs to be understood against this background of the need for a theory of action that informs how, why, and at which strategic points data of a particular type should, ideally, be used. Teachers need a theory of action that drives their use of data.

The origins and purpose of ANA in the South African education system

National assessment surveys were first implemented in South Africa in 1996 on representative samples of schools and learners in Grade 9, followed by Grades 3 and 6 (Kanjee 2007). ANA in its current design was implemented in 2010 as a national strategy to monitor the level and quality of basic education, with a view to ensuring that every child receives basic education of a high quality, regardless of the school they attend.

The introduction of ANA was partly necessitated by repeated findings that South African learners were underperforming in relation to the financial and resource inputs that the state invested in education (Chisholm & Wildeman 2013). Consequently, the national Department of Basic Education (DBE) prioritised the provision of basic education of high quality to all learners as a key deliverable. A presidential injunction was issued to conduct ANA and monitor performance, with the target set at 60% of learners in Grades 3, 6 and 9 achieving acceptable levels of literacy and numeracy by 2014 (The Presidency, Republic of South Africa 2010). In the education sector plan, Action Plan 2014: Towards Schooling 2025 (RSA DBE 2012a), the DBE identified laudable intervention strategies to improve the quality of basic education and also specified measurable goals that were intended to be achieved by 2014. According to the plan, ANA is expected to improve learning in four key ways, namely:

"1) Exposing teachers to best practices in assessment; 2) targeting interventions to the schools that need them most; 3) giving the schools the opportunity to pride themselves in their own improvement; and 4) giving parents better information on the education of their children."

(RSA DBE 2012a: 49)

The implementation of ANA was envisaged as a two-tier approach for testing learners, based on the administration of a 'universal ANA' and 'verification ANA', and a parallel third process for testing teachers (RSA DBE 2012a).

In terms of the 'universal ANA', the Action Plan specifies that all schools in the country must conduct the same grade-specific language and mathematics tests for Grades 1 to 6 and for Grade 9 (RSA DBE 2012a). These tests are to be marked by schools and moderated by the province. Each district is required to produce a district-wide report and inform schools how well they are performing in relation to other schools in the district, province and country. Specifically, the Action Plan requires districts to "promote improvements in all schools [and] explain how school results feed into district results, but without attaching actual performance targets to every school" (ibid:54). The Action Plan notes that the district-wide ANA report is a vital tool for managing improvements, and specifies that

[...] the district office will pay particular attention to supporting schools that have performed poorly in ANA, and to ensuring that these schools have the teachers and materials they should have.

(RSA DBE 2012a: 54)

The purpose of verification ANA is twofold. First, it is to report on performance at the national and provincial levels using ANA scores that are highly reliable; and second, it must identify key factors that impact on learner performance (RSA DBE 2012a). The verification ANA is envisaged to be administered as an external and independent exercise in a random sample of schools selected from all provinces. The Action Plan also proposes a third, parallel component for teacher testing, based on testing a sample of teachers from schools that also participate in verification ANA. As specified in the Action Plan, the "focus of the teacher tests is on both subject knowledge and knowledge in pedagogics and teaching methodologies" (ibid:56). However, to date, no information is available on whether this component has been implemented.

Kanjee (2011) argues that the ANAs can only impact on improving teaching in schools (and with that, expecting improvement in learning) if a number of critical issues pertaining to its conceptualisation and overall design are addressed. Key issues raised by Kanjee (2011) include the lack of a clear theory of change regarding how the ANAs can impact on improving learning and teaching in schools, and the appropriateness of the universal and verification ANAs to provide relevant information for addressing the twin needs of national monitoring and classroom intervention - specifically, Kanjee notes that the current design does not provide results to policy makers that can be compared across different years, given the lack of any common instruments or items, and that the current instruments provide limited diagnostic information to teachers. Other key issues are the development of effective systems and processes for the reporting and dissemination of results; the provision of support and tools to teachers and district officials for the effective use of ANA results to improve learning; the implementation of appropriate systems and processes to monitor and evaluate the quality of the ANAs; and the capacity of the DBE and provinces to effect the requisite changes so that the primary goal of the ANA, which is to improve learning through teaching in schools, can be attained.

Guidelines for the interpretation and use of the ANAs

In addition to the national ANA report, the DBE publishes guidelines on how to interpret and use the ANA results (RSA DBE 2011b, 2012b, 2013b). The guideline documents are prefaced with the purpose of ANA, specifying that the results of ANA will enable the education sector to increase feedback and evidence about how the various strategies and interventions put in place by the Department impact on learner performance. The documents further outline the manner in which the results have been presented and should be interpreted and used. Finally, and most importantly, they outline how ANA results should be integrated into all the programmes in the schooling system.

A limitation of the guideline documents is that they address more than one target group, namely teachers, school managements, and district and provincial officials. Consequently, the documents tend to be shallow in addressing the data use needs of the diverse users and, therefore, may not do justice to all the target groups. In Marsh's (2012) and Park's (2012) studies it was reported that teachers often find the results of large-scale assessments less useful than their own classroom assessments.

Research questions

Notwithstanding current debates regarding the value of national assessments and their impact on improving teaching and learning, and accepting the premise that the ANAs can serve as a catalyst for spearheading reform in the classroom, the critical challenge remains of how this is carried out in practice and the extent to which the system is able to facilitate such a process at scale, so as to address the learning and teaching needs of all schools. Starting from this premise, this paper investigates key challenges and prospects facing teachers as they strive to use ANA results to improve learning and teaching in South African schools, focussing on the following questions:

1. What are teachers' understandings and views of the ANAs?

2. What are some of the limiting constraints to and prospects for teachers' effective use of the ANAs to improve learning and teaching?

3. What differences, if any, exist between teachers across the different school quintiles?

Given its focus, this study does not make claims that are representative of all teachers and schools in South Africa. Rather, the primary objective is to contribute to current debates on the value and use of the ANAs in South African schools.

Methodology

This section provides an overview of the participants involved in the study, the instruments used to collect data, and the analysis conducted to report findings.

Participants

Participants for this study were selected from a convenience sample of teachers participating in a professional development programme aimed at improving teacher classroom practices. Given that the ANAs only focus on language and mathematics in Grades 1 to 6, data was limited to foundation phase (FP) and intermediate phase (IP) language and mathematics teachers. In total, the sample comprised 114 teachers: 44 FP and 70 IP teachers. Of the IP teachers, 41 reported that they were currently teaching English, 8 Afrikaans, and 42 mathematics, with a number of teachers reporting that they taught a combination of these subjects. Only two FP teachers were male, while 24 IP teachers were male.

With regard to the school type, 32 teachers reported teaching in Quintile 1 schools, 27 in Quintile 2, 34 in Quintile 3, 15 in Quintile 4, and 5 in Quintile 5, with data missing for one teacher. In addition, interviews were also conducted with a small number of teachers and one school principal.

Data collection

Data was collected by workshop facilitators during the first meeting of the professional development programme, which took place in August and September 2012, prior to the administration of the 2012 ANAs. A questionnaire was developed based on information obtained from discussions with a small group of teachers regarding their experiences and views of the ANAs. It comprised 62 items, presented in three sections. Section 1 comprised nine selected-response questions that sought background information such as gender, age, teaching experience, and grades and subjects taught. Section 2 comprised 36 selected- and free-response questions that sought information on participation in professional development programmes, access to and use of assessment policy, perceptions of testing in general and the ANAs in particular, and experiences and challenges in the use of information from the ANAs. Section 3 comprised 17 free-response questions that sought information on classroom assessment practices, focussing on formative assessment. For this paper, only data from Sections 1 and 2 was used. The Cronbach's alpha for Section 2 was 0.72, which indicates acceptable internal consistency (Cohen, Manion & Morrison 2011).
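For readers less familiar with the reliability statistic reported above, Cronbach's alpha for a scale of k items is alpha = k/(k-1) × (1 - (sum of the item variances) / (variance of the total scores)). The Python sketch below computes it from a respondents-by-items score matrix. It is a minimal illustration under that standard definition, not the SPSS procedure used in the study; the function name `cronbach_alpha` and the toy data are hypothetical.

```python
from statistics import pvariance


def cronbach_alpha(scores):
    """Cronbach's alpha for a respondents-by-items matrix of numeric scores.

    scores: list of rows, one row per respondent, each holding k item scores.
    (Hypothetical helper for illustration only.)
    """
    k = len(scores[0])
    if k < 2 or any(len(row) != k for row in scores):
        raise ValueError("need a rectangular matrix with at least two items")
    # Variance of each item (column) across respondents.
    item_variances = [pvariance(column) for column in zip(*scores)]
    # Variance of each respondent's total score across all items.
    total_variance = pvariance([sum(row) for row in scores])
    if total_variance == 0:
        raise ValueError("total scores have zero variance")
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


# Hypothetical toy data: three respondents answering two items identically.
print(cronbach_alpha([[1, 1], [2, 2], [3, 3]]))  # items agree perfectly -> 1.0
```

Population variances are used throughout; switching every call to the sample variance would leave alpha unchanged, because the same n/(n-1) factor appears in both the numerator and the denominator of the ratio.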

Analysis

The analysis was conducted using the SPSS software package and comprised descriptive statistics for the fixed-response questions, presented as tables or graphs, while common themes relevant to the research questions were identified for the free-response and interview questions. In addition, missing values were addressed through imputation procedures. To provide a basis for comparisons, the data was analysed separately for FP and IP teachers, and, where appropriate, by school quintile category as well. However, given the low number of teachers from Quintile 5 schools, results from this category were excluded from the analysis.
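Most of the comparisons reported in the results take the form "percentage of teachers who agreed or strongly agreed", broken down by phase or by quintile, with Quintile 5 excluded. The Python sketch below shows one way to tabulate that figure for a single Likert item. It is hypothetical throughout: the function name, the group labels such as "Q1", and the toy responses are illustrative. Unlike the study, which used SPSS and imputed missing values, this sketch simply drops missing answers.

```python
from collections import defaultdict

# Response categories counted as agreement (assumed coding).
AGREE = {"agree", "strongly agree"}


def percent_agree_by_group(responses, exclude=("Q5",)):
    """Percentage of answered responses coded agree/strongly agree, per group.

    responses: iterable of (group, answer) pairs, e.g. ("Q1", "agree");
    answer may be None for a missing response.
    """
    counts = defaultdict(lambda: [0, 0])  # group -> [agreeing, answered]
    for group, answer in responses:
        if group in exclude or answer is None:
            continue  # skip excluded groups and missing answers
        counts[group][1] += 1
        if answer.strip().lower() in AGREE:
            counts[group][0] += 1
    return {
        group: round(100 * agreeing / answered, 1)
        for group, (agreeing, answered) in sorted(counts.items())
    }


# Hypothetical responses to one questionnaire item.
sample = [
    ("Q1", "Agree"), ("Q1", "Disagree"), ("Q1", None),
    ("Q2", "Strongly agree"), ("Q2", "agree"),
    ("Q5", "agree"),
]
print(percent_agree_by_group(sample))  # {'Q1': 50.0, 'Q2': 100.0}
```

Passing `exclude=()` would keep every group, which is how the same function could produce the FP and IP comparisons by using phase labels instead of quintiles.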

Results and discussion

To contextualise the findings regarding the key challenges and prospects facing teachers in the implementation and use of the ANAs, information was obtained on teacher views about the value of testing in general, and the ANAs in particular (see Table 1). The majority of FP and IP teachers displayed a positive view of testing, and also agreed that the ANAs can assist teachers to improve learning. However, 13% of FP teachers agreed or strongly agreed with the statement that the ANA tests were a waste of time and money, while the corresponding figure for IP teachers was double this percentage. Graven and Venkatakrishnan (2013) report similarly positive views from a group of mathematics teachers, who noted that

ANAs are good for standardizing content coverage, making explicit one's expectations about what will be assessed, providing information on learners' levels of understanding, and providing guidance on content coverage.

(Graven & Venkatakrishnan 2013:13)

On the negative aspects of ANA, the authors report that teachers highlighted the issue of language within the questions, the timing of the ANA in September, and the bureaucratic arrangements.

More concerning are the findings that a third of the FP teachers and approximately half of the IP teachers indicated that staff at their school were not prepared to administer the ANAs, while approximately 60% of teachers from both groups agreed or strongly agreed with the statement that teachers do not know how to use the ANA results. These findings are neither surprising nor unique to South African teachers. The limited assessment knowledge and skills of teachers have been reported by a number of studies over the years (RSA DBE 2009; RSA DoE 2000; Kanjee & Croft 2012; Kanjee & Mthembu 2014; Pryor & Lubisi 2002; Vandeyar & Killen 2007). In their study, Kanjee and Mthembu (2014) reported equally low levels of assessment literacy among South African teachers across the different school quintile categories. Limited teacher assessment knowledge and skills have also been highlighted in Chile, where ANA-type census-based national assessments, known as SIMCE (Sistema de Medición de la Calidad de la Educación), are conducted. Specifically, Ramirez notes the following:

At the school level, SIMCE information seems to be underused. While educators value the SIMCE information, they have difficulties understanding and using it for pedagogical purposes.

(Ramirez 2012:18)

A comparison of teacher responses across school quintiles reveals largely similar patterns (see Table 1), with a generally positive view of the value of testing in general and of the ANA tests in particular. Across the quintiles, between 15% (Quintile 2) and 33% (Quintile 4) of teachers felt that the ANA tests were a waste of time and money. A relatively low percentage of teachers (an average of 56%) across all quintile categories agreed or strongly agreed that their staff were prepared to administer the ANAs, while a high percentage (an average of 62%) felt that teachers did not know how to use the ANA results, with the highest percentage noted for Quintile 2 schools (85%). In addition, just over a third of teachers across the different quintile categories agreed or strongly agreed that the district provided guidance and support regarding the use of the ANA results.

Receipt of relevant documentation

In order to ensure the effective implementation of the ANAs, the DBE has to ensure that all relevant documentation - that is, the ANA tests, ANA exemplars, administration guidelines, data entry tools and guidelines - are provided to schools in advance. Across both the FP and IP teacher groups, the overwhelming majority reported that they had received the ANA exemplars at the time of the study, while approximately 80% from both groups reported that they had received the tests and the data entry guidelines (Figure 1). The high positive response rate regarding the exemplars is understandable, as these are usually provided to schools relatively early on so that teachers are able to prepare their learners to take the ANAs. In addition, the exemplars are also made available on the Internet for easy access.

A review of teacher responses by school quintile category (Table 2) reveals only minor differences across Quintile 1 to 4 schools, with a high overall percentage (more than 75%) of teachers reporting that they had received the ANA tests, exemplars and data entry guidelines, and approximately two-thirds of teachers reporting that they had received the data entry tools.

Training in administration and use

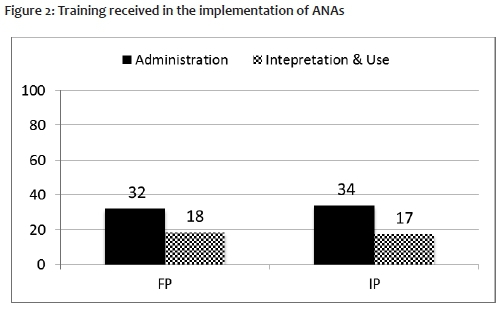

Given the crucial role of districts in supporting schools, and in particular with regard to the implementation and use of the ANAs, teachers were also asked about the guidance and training received from the districts. Approximately 65% of the FP and IP teachers disagreed/strongly disagreed with the statement: 'The District/Regional office provides guidance/training on the use of the ANA results' (see Table 1). In addition, as shown in Figure 2, approximately a third responded in the affirmative with regard to administration training, while less than a fifth indicated that they had received training in the interpretation and use of ANA results. For both sets of training sessions, the majority of FP and IP teachers (approximately 90%) who had received training reported that the district or province had provided the training, with approximately two-thirds reporting that they had found the training sessions very useful. Given the low number of teachers attending training, it appears that a key challenge is the capacity of the district or province to provide teachers with the training they require. In this regard, Marsh (2012) notes that although districts occupy a strategic position to support schools in developing a culture of data-driven interventions, one of the limiting factors towards district support for schools is the lack of capacity at that level.

A relatively low percentage of teachers across the quintile categories reported having received training in the administration of the ANAs (between 35% and 48%) or in the interpretation and use of the results (between 19% and 28%). Similar to previous comparisons, the findings indicate minimal differences in the responses of teachers from schools across the different quintile categories, as may be seen in Figure 3.

Teachers' plans for using ANAs

The use of assessment information to identify and address learner problems is regarded as the most critical contribution of national assessments (Marsh 2012; Popham 2011; Schiefelbein & Schiefelbein 2003). Approximately half of the teachers in each group (21 FP and 38 IP teachers) provided detailed responses about how they planned to use the ANA results. These responses were reviewed based on the extent to which they reflected the key purposes of ANA, as specified in the Action Plan (RSA DBE 2012a) and the Curriculum News (RSA DBE 2011c). These purposes include exposing teachers to best practices in assessment; targeting interventions to schools; providing an opportunity for schools to take pride in their achievement; providing information to parents; and improving teaching and learning.

The largest number of FP teacher responses focused on the 'improving teaching and learning' aspect (8), followed by 'targeting interventions' (2) and 'exposing teachers to best practices' (1). Similarly, the majority of responses from the IP teachers emphasised the 'improving learning and teaching' aspect (20), with two each placing the emphasis on 'targeting interventions', 'best practices' and 'informing parents'. The following comments highlight some issues raised by the teachers regarding the 'improving teaching and learning' aspect:

"The ANA results to me serve as a measuring stick in my classroom. It will assist me to identify my teaching methods and techniques whether it suites my learners understanding."

(FP, Q2)

"[...] to see their weaknesses and strengths, firstly to try and look thoroughly at their weaknesses and teach those thoroughly, not overlooking their strengths but also catering for both."

(FP, Q3)

In the IP group, one teacher noted the following:

"The results show which topics learners perform bad on, then I will emphasise those topics and [in] the ones in which they did well I will teach only for short time."

(Maths teacher, Q1)

A second teacher responded as follows:

"I will check their scripts and identify the areas where they were not successfully answered and it will guide me [in] my teaching and also [to] use foundations for learning, assessment guidelines, ANA examples and ANA scripts in my plan."

(Maths, Q3)

The teachers reported the following with regard to 'best practice':

"We use exemplar question papers as class work or homework so that the learner will get used to using paper rather than test what is written on board."

(FP, Q2)

"To check the way they formulate questions and help my learners to use and adapt to that. To improve the standard of question papers at the institution."

(Maths, Q3)

Graven and Venkatakrishnan (2013) report similar findings in their study on mathematics teachers' use of ANAs. The authors note that the teachers used the exemplars to revise content and familiarise learners with the test format, as well as for guidance on how to cover the curriculum. Similarly, in a project on the use of Assessment Resource Banks (ARBs) in poorly resourced and rural schools, Kanjee (2009) found that teachers not only used the ARB test items as exemplars to develop their own test items and classroom tests, but also as lesson planning guidelines and a pool of test items for use in lesson delivery and the assignment of homework and class work.

A focus on targeting interventions was reflected in the following teacher inputs:

"It will help the national [department to] implement policies and [...] to prepare the educators to teach and what to teach. It will help [... ] to assess their curriculum and the areas that need development."

(FP, Q3)

At the IP level, one teacher noted:

"By giving similar tests to help learners to cope better with these tests. Strengthen intervention programmes for reading, etc."

(English, Q2)

These responses reflect a deeper understanding of uses of ANA information that extend beyond the classroom. What is not clear is how teachers arrived at this understanding, and how it affects their views and their use of the ANA results.

All other responses were unrelated to any of the specified purposes, instead highlighting only logistical issues such as the delivery of materials and workbooks. However, two teachers reported using the ANA results in lieu of term marks, one of whom noted the following:

"I plan to use ANA results for the term. As additional marks, instead of writing 2 tasks, we must write only one, the second one must be ANA results, to save time for writing and marking and recording learners' marks to submit [the] progression schedule in time as usual."

(FP, Q3)

Similarly, one IP teacher reported that she used the ANA results as her third-term marks. This is despite the specification in the National Curriculum Statement (NCS) that ANA results should not be used as formal term marks or for progression purposes (RSA DBE 2011d).

The above responses indicate that the majority of teachers across both the FP and IP groups understand the primary focus of the ANA to be improving teaching and learning, even though this specific purpose is not listed in the Action Plan 2014 (RSA DBE 2012a). However, it is also clear that the teachers have limited insight into how the ANA results should be used for this purpose. This is not surprising, given the limited information and training the teachers had received in this regard. In their research on data use by teachers, Datnow, Park and Kennedy-Lewis (2012) argue that the underlying theory of action of this approach is that teachers need to know how to analyse, interpret and use data so that they can make informed decisions about how to improve learner performance in national assessments. However, teachers need to be adequately qualified, and require the relevant knowledge and expertise, to use assessment information to enhance learning and teaching practices in their classrooms. In practice, this is not always the case (Brookhart 2011; Kanjee & Mthembu 2014; Popham 2011).

School plans for using ANAs

Regarding the use of ANA results, the Curriculum News (RSA DBE 2011c) requires all teachers and schools to have a clear plan of action. It specifies that the DBE expects all schools to finalise the analysis of their learners' performance by the end of February and to share the results with parents, and that schools that did not perform as well as expected should already have heard, or should expect to hear, from their district offices regarding a discussion of their performance and their improvement plans. Schools are also expected to set their own targets for improving their ANA scores, and are reminded of the required target of 60% and above for the majority of learners in Grades 1 to 9.

As noted in Table 3, only 14% of FP and 16% of IP teachers reported that their schools had a plan for using the ANA results, while 44% and 34% respectively reported that their school did not have a plan. Notably, 41% of FP and 50% of IP teachers did not respond to this question. These findings are especially concerning given that the primary intention of the ANA is to provide information to schools for use in identifying and addressing the learning gaps of their learners.

Among the teachers who reported that their school had a plan to use the ANA results, responses from both FP and IP teachers were relatively vague, with few details about how these plans would be implemented to enhance learning. Of the six responses from FP teachers, one teacher reported:

"The school analysed the results and took a decision to do tasks for this term based on the questions that were badly answered."

(FP, Q3)

A second teacher noted:

"This can be used and can assist the next grade educators, the diagnostic assessment where educators will hand over their learners and also giving them ANA results and scripts for them to see learners' level of understanding."

(FP, Q2)

Of the nine responses from IP teachers, three focused on providing information for parents, and six on the use of ANAs for implementing interventions to improve learning. For example, one teacher reported:

"We sit as phases to see where the learners went wrong and how we will correct that moving forward. The results are also used to determine the learners' understanding of the different methods of questioning. ANA results will be used for improvement purposes."

(Maths, Q4)

Commenting on similar challenges regarding the limited use of assessment results by schools in Chile, Ramirez (2012:11) notes that "providing feedback to the schools and teachers with useful assessment information does not seem to be enough to improve higher student learning", and argues that the right institutional arrangements and incentives must be in place for the assessment information to have an effect. In this context, the theory of action proposed by Marsh (2012) provides a practical option for developing the right institutional framework noted by Ramirez (2012).

A review of the teacher responses about the existence of school plans (Figure 5) reveals that the overwhelming majority of the teachers across Q1 to Q4 schools either reported that their school did not have a plan for the use of the ANA results or did not respond to the question ('Missing'). In Q4 schools, however, approximately a third of the teachers responded in the affirmative.

Interview responses

Interviews were conducted with six teachers and one school principal. As with the limitations noted above, these interviews were not intended to obtain a representative view of all teachers, but to better understand some of the survey responses. Not surprisingly, the teachers' interview responses generally echoed those in the surveys. However, two additional issues were raised. The first reflects both teachers' innovativeness in analysing ANA results and the potential value of the ANAs; the second reflects the wide-ranging reactions to, and views of, the ANA among teachers.

With regard to the innovative analysis of the ANA results, one teacher noted:

"[...] to do a mark analysis and to understand what your marks are telling you, you don't need any fancy programs [...] you can do this with different colour markers."

(Maths, Q5)

This teacher had developed an innovative system whereby all items on which learners obtained less than 50% were colour-coded according to the sub-domain assessed by that item, for example fractions or multiplication (see Figure 5). In consultation with all the mathematics teachers at the school, common trends were identified across the grades, reasons and possible explanations for these trends were discussed, and specific interventions to address them were developed. For example, using the 2011 ANA results, two clear trends were detected at the school. First, Grade 2 learners had problems with repeated subtraction, Grade 3 with halving, Grade 4 with division, Grade 5 with ratio and division, and Grade 6 with ratio. Second, Grade 3 learners also experienced problems with ordering fractions, Grades 4 and 5 with equivalent and adding fractions, and Grade 5 with equivalent fractions. Systems such as this one should be further explored, developed and shared with all teachers and schools. This is exactly the kind of activity that district and provincial officials should take up and replicate where possible.
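The colour-marker procedure described above amounts to a simple filter-and-group step: flag every item on which learners scored below 50%, label it by sub-domain, and look for sub-domains that are flagged in more than one grade. The following is a minimal Python sketch of that logic, not the teacher's actual system. It assumes item-level percentages correct have already been captured per grade; the item identifiers, sub-domain labels and percentages are hypothetical and are not ANA results.

```python
from collections import defaultdict

# Hypothetical item-level results for one school: (grade, item_id, sub_domain, percent_correct).
# Illustrative values only; these are NOT ANA results.
ITEMS = [
    (3, "Q2", "division", 42.0),
    (3, "Q5", "fractions", 38.5),
    (3, "Q6", "addition", 71.0),
    (4, "Q3", "division", 45.0),
    (4, "Q8", "fractions", 49.9),
    (4, "Q9", "place value", 64.0),
    (5, "Q4", "fractions", 55.0),
]

THRESHOLD = 50.0  # flag items on which learners obtained less than 50%


def flag_weak_items(items, threshold=THRESHOLD):
    """Group items scoring below the threshold by sub-domain (the 'colour')."""
    flagged = defaultdict(list)
    for grade, item_id, sub_domain, pct in items:
        if pct < threshold:
            flagged[sub_domain].append((grade, item_id))
    return dict(flagged)


def cross_grade_trends(flagged):
    """Return sub-domains flagged in more than one grade: candidate school-wide trends."""
    return sorted(
        sub_domain
        for sub_domain, hits in flagged.items()
        if len({grade for grade, _ in hits}) > 1
    )


if __name__ == "__main__":
    flagged = flag_weak_items(ITEMS)
    for sub_domain, hits in flagged.items():
        print(sub_domain, hits)
    print("Trends across grades:", cross_grade_trends(flagged))
```

With these hypothetical values, both "division" and "fractions" are flagged in Grades 3 and 4, which is the kind of cross-grade pattern the teachers then discussed. As the teacher points out, colour markers on paper do the same job; the sketch only shows that the analysis needs nothing more than a threshold and a sub-domain label per item.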

However, there are also challenges to the use of ANA results, highlighted in particular by two sets of responses. Firstly, two teachers reported that the ANAs were just another externally imposed burden that they have to contend with:

"Once we're done with the ANAs, the marking, the entering and everything, we send data to the districts, and then schooling goes on as usual."

(Maths, Q3)

Similar findings were noted in the National Education Evaluation & Development Unit's (NEEDU) National Report 2012: The State of Literacy Teaching and Learning in the Foundation Phase (NEEDU 2013).

Secondly, the interview with the school principal, conducted during the 2014 administration of the ANAs, highlighted two critical issues. The principal's first comment was:

"Our teachers are not teaching to the test, they're teaching to cover the curriculum. But they can't finish the curriculum, because this ANA is taking up the time. So we are doing more than what is required by ANA, but ANA is now limiting what we do."

(School Principal, Q5)

While the extent and prevalence of similar sentiments and practices within the system are not known, these comments highlight the perverse impact of accountability regimes. Noting similar concerns, Gilmour, Christie and Soudien (2012:57) warn that basic skills may come to dominate curricula as a result of "the perverse effects of testing in narrowing curricula as teachers strive to achieve good results through 'teaching to the test'".

In his second comment, the principal highlighted the issue of 'cheating':

"Our teachers are paying more attention to the school concert than to the ANAs. Why? Because we can't understand how our neighbouring schools, who have the same learner profiles and numbers, and the same challenges and problems as us, [...] keep getting high ANA scores, in the 60s and 70s. Now how is this possible? Our teachers are clear that we will not cheat, but they also know, no matter what they do, anything that is done honestly, we will still remain an underperforming school. So what's the use?"

(School Principal, Q5)

Volante and Cherubini (2010) report similar suspicions of teaching to the test among teachers in Ontario. Notwithstanding some of the assumptions made regarding the value of the tests in providing relevant diagnostic information, the capacity of teachers and education officials to use this information effectively for developing interventions to improve learning and teaching, or the negative impact of accountability regimes on classroom practice, the issue of teaching to the test merits further consideration within the context of South African schools (Kanjee 2011). Specifically, given the limited to no teaching and low levels of learning in many South African schools (RSA DBE 2011a; 2013c), teaching to high-quality tests that are largely representative of the curriculum provides schools, school leadership, teachers and learners with significantly more structure, focus and direction (Popham & Ryan 2012).

Conclusion

The Annual National Assessment represents one of the largest initiatives undertaken to improve learning and teaching in recent years. While the debate regarding its conceptualisation and value for addressing the key challenge of improving quality continues (Chisholm & Wildeman 2013; Gilmour et al 2012; Graven & Venkatakrishnan 2013; Kanjee 2011), the findings from this study reveal that most teachers were positive about the value of testing and the contribution that the ANAs could make to improving learning. At the same time, the majority of teachers across both the FP and IP groups and the different quintile categories were not adequately prepared to use the ANA results effectively to address the learning gaps of their learners, nor did most of the participating schools have any effective plans in this regard. While teachers who received training in the implementation and use of the ANAs found this very useful, a serious concern is that the majority of teachers, across all quintile categories and both phases, reported receiving minimal support and guidance from the district, despite the district's clear mandate, as specified in the Action Plan 2014, to ensure the effective implementation and use of the ANA results.

In implementing the ANAs, large sums of money have been spent to obtain 'valid and reliable' information for use in improving learners' performance levels, but limited information and support are provided to teachers and schools about how this should be achieved. The primary consequence is that the use of assessment information shifts away from improving learning towards the promotion of a 'testing' and 'measurement' culture. Within this context, the single most critical challenge is to support teachers and schools in using assessment results to improve learning in all classrooms.

In addressing this challenge, we highlight two current initiatives to enhance teacher capacity and skills for using assessment to improve learning and teaching. The first initiative comprises the development of performance descriptors and clear cut scores to categorise learners into specific performance levels, so that teachers have detailed information about what learners know and can do. Teachers are provided with an Excel programme into which their learners' ANA scores can be entered; the programme then generates detailed reports that indicate the performance category into which each learner falls and suggests specific next steps for interventions to address each learner's learning gaps (Kanjee & Moloi 2012).
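The categorisation step behind such a report can be illustrated outside Excel. The following is a minimal Python sketch, assuming four performance levels bounded by three cut scores and one suggested next step per level. The cut scores, level names and next-step wording are hypothetical placeholders, not the descriptors developed by Kanjee and Moloi (2012), and the sketch is not a reproduction of their programme.

```python
from bisect import bisect_right

# Hypothetical cut scores (lower bound of each level above the first, in percent)
# and level labels. Placeholders only; NOT the descriptors of Kanjee & Moloi (2012).
CUT_SCORES = [30, 50, 70]
LEVELS = ["Not achieved", "Partially achieved", "Achieved", "Exceeded"]

# Hypothetical next-step suggestions keyed by level.
NEXT_STEPS = {
    "Not achieved": "Re-teach foundational concepts in small groups.",
    "Partially achieved": "Target the specific sub-domains answered incorrectly.",
    "Achieved": "Consolidate with practice at grade level.",
    "Exceeded": "Provide extension tasks.",
}


def performance_level(score):
    """Map a percentage score to a performance level using the cut scores."""
    if not 0 <= score <= 100:
        raise ValueError(f"score must be between 0 and 100, got {score}")
    # bisect_right counts how many cut scores are <= score, which is the level index.
    return LEVELS[bisect_right(CUT_SCORES, score)]


def class_report(scores):
    """Return {learner: (level, suggested next step)} for a dict of learner scores."""
    report = {}
    for learner, score in scores.items():
        level = performance_level(score)
        report[learner] = (level, NEXT_STEPS[level])
    return report


if __name__ == "__main__":
    for learner, (level, step) in class_report({"Learner A": 45, "Learner B": 72}).items():
        print(f"{learner}: {level} -> {step}")
```

In this sketch a score falling exactly on a cut score is placed in the higher level; whether the actual programme treats boundary scores this way is an assumption.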

The second initiative is an integrated teacher development programme for pre- and in-service teachers. The in-service programme comprises nine modules offered over a period of one year and focuses on the use of classroom assessment information for formative and summative purposes, with specific emphasis on the ANAs. The pre-service programme comprises eight modules offered over a three-year cycle to teacher trainees from their second year onwards (Kanjee 2013). Both initiatives are currently being piloted, with results not expected until 2015/2016.

References

Abu-Alhija FN. 2007. Large-scale testing: Benefits and pitfalls. Studies in educational evaluation, 33(1):50-68.

Bansilal S. 2012. What can we learn from the KZN ANA results? SA-eDUC JOURNAL, 9(2). [Retrieved 5 August 2014] http://www.nwu.ac.za/sites/www.nwu.ac.za/files/files/p-saeduc/New_Folder_1/2_What%20can%20we%20learn%20from%20the%20KZN%20ANA%20results.pdf

Brookhart SM. 2011. Educational Assessment Knowledge and Skills for Teachers. Educational Measurement: Issues and Practice, 30(1):3-12.

Chisholm L & Wildeman R. 2013. The politics of testing in South Africa. Journal of Curriculum Studies, 45(1):89-100.

Cohen L, Manion L & Morrison K. 2011. Research methods in education. 7th Edition. London: Routledge Falmer.

Datnow A, Park V & Kennedy-Lewis B. 2012. High school teachers' use of data to inform instruction. Journal of Education for Students Placed at Risk (JESPAR), 17(4):247-265.

Gilmore A. 2002. Large-scale assessment and teachers' assessment capacity: Learning opportunities for teachers in the National Education Monitoring Project in New Zealand. Assessment in Education, 9(3):343-361.

Gilmour D, Christie P & Soudien C. 2012. The poverty of education. [Retrieved 5 August 2014] http://www.carnegie3.org.za/papers/91_Gilmour_The%20poverty%20of%20Education%20in%20SA.pdf.

Graven M & Venkatakrishnan H. 2013. ANAs: Possibilities and constraints for mathematical learning. Learning and Teaching Mathematics, 14:12-16.

Graven M, Venkat H, Westaway L & Tshesane H. 2013. Place value without number sense: Exploring the need for mental mathematical skills assessment within the Annual National Assessments. South African Journal of Childhood Education, 3(2):131-143.

Kanjee A. 2007. Large-scale assessments in South Africa: Supporting formative assessments in schools. Paper presented at the 33rd International Association for Educational Assessment Conference, Baku, Azerbaijan, 16 to 21 September.

Kanjee A. 2009. Enhancing teacher assessment practices in South African schools: Evaluation of the assessment resource banks. Education as Change, 13(1):67-83.

Kanjee A. 2011. Assessment, accountability and policy reform in South Africa. Paper presented at the national conference of the Association for Evaluation and Assessment in Southern Africa (ASEASA), Vanderbijlpark, 11 to 13 July.

Kanjee A. 2013. Enhancing teachers' use of assessment for improving learning: A district-wide professional development programme. Proposal submitted to the National Research Foundation. Department of Educational Studies, Tshwane University of Technology, Pretoria.

Kanjee A & Croft C. 2012. Enhancing the use of assessment for learning: Addressing challenges facing South African teachers. Paper presented at the 2012 annual meeting of the American Educational Research Association, Vancouver, Canada, 13 to 17 April.

Kanjee A & Moloi Q. 2012. Performance standards and the use of assessment results in South African schools. Paper presented at the UMALUSI Conference, Johannesburg, 10 to 12 May.

Kanjee A & Mthembu ZJ. 2014. Assessment literacy of foundation phase teachers: An exploratory study. Paper submitted to the South African Journal of Childhood Education.

Kanjee A & Sayed Y. 2013. Assessment policy in post-apartheid South Africa: Challenges for improving education quality and learning. Assessment in Education: Principles, Policy & Practice, 20(4):442-469.

Kellaghan T & Greaney V. 2004. Assessing student learning in Africa. Washington, DC: World Bank Publications.

Kellaghan T, Greaney V & Murray S. 2009. Using the results of a national assessment of educational achievement. Volume 5. Washington, DC: World Bank Publications.

Mandinach EB. 2012. A perfect time for data use: Using data-driven decision making to inform practice. Educational Psychologist, 47(2):71-85.

Marsh JA. 2012. Interventions promoting educators' use of data: Research insights and gaps. Teachers College Record, 114(11):1-48.

Moloi MQ & Chetty M. 2010. The SACMEQ III Project in South Africa. A study of the conditions of schooling and the quality of education. SACMEQ (Southern and Eastern Africa Consortium for Monitoring Educational Quality) Educational Policy Research Series. Pretoria: Department of Basic Education.

NEEDU (National Education Evaluation & Development Unit). 2013. National Report 2012: The State of Literacy Teaching and Learning in the Foundation Phase. [Retrieved 5 August 2014] http://www.education.gov.za/LinkClick.aspx?fileticket=rnEmFMiZKU8%3d&tabid=86o&mid=2407.

Park CS. 2012. Making use of district and school data. Practical Assessment, Research & Evaluation, 17(10):1-15.

Pellegrino JW, Chudowsky N & Glaser R. 2001. Knowing What Students Know: The Science and Design of Educational Assessment. Washington, DC: National Academy Press.

Popham WJ. 2009. Assessment literacy for teachers: Faddish or fundamental? Theory into Practice, 48(1):4-11.

Popham WJ. 2011. Assessment literacy overlooked: A teacher educator's confession. The Teacher Educator, 46(4):265-273.

Popham WJ & Ryan JM. 2012. Determining a high-stakes test's instructional sensitivity. Paper presented at the Annual Meeting of the National Council on Measurement in Education, Vancouver, Canada, 12 to 16 April.

Pryor J & Lubisi C. 2002. Reconceptualising educational assessment in South Africa - testing times for teachers. International Journal of Educational Development, 22(6):673-686.

Ramirez MJ. 2012. Disseminating and using student assessment information in Chile. Washington, DC: World Bank Publications.

RSA DBE (Republic of South Africa. Department of Basic Education). 2009. Report of the Ministerial Task Team for the Review of the Implementation of the National Curriculum Statement. Pretoria: DBE.

RSA DBE. 2011a. Report on the Annual National Assessments of 2011. Pretoria: DBE.

RSA DBE. 2011b. Annual National Assessments 2011: A guideline for the interpretation and use of ANA results. Pretoria: DBE.

RSA DBE. 2011c. Curriculum News: Improving the quality of learning and teaching and strengthening curriculum implementation from 2010 and beyond. Pretoria: DBE.

RSA DBE. 2011d. National Curriculum Statement (NCS). Curriculum and Policy Statement: Mathematics Intermediate Phase Grade 4-6. Pretoria: DBE.

RSA DBE. 2012a. Action plan to 2014: Towards the realisation of schooling 2025 - Full version. Pretoria: Department of Basic Education.

RSA DBE. 2012b. Annual National Assessments 2012: A guideline for the interpretation and use of ANA results. Pretoria: DBE.

RSA DBE. 2012c. National Protocol for Assessment: Grade R-12. Pretoria: DBE.

RSA DBE. 2013a. Report on the Annual National Assessment of 2013: Grade 1 to 6 and 9. Pretoria: DBE.

RSA DBE. 2013b. Annual National Assessments: 2013 Diagnostic report and 2014 framework for improvement. Pretoria: DBE.

RSA DBE. 2013c. The State Of Our Education System. National Report 2012: The State of Literacy Teaching and Learning in the Foundation Phase. National Education Evaluation and Development Unit. Pretoria: DBE.

RSA DoE (Republic of South Africa. Department of Education). 2000. A South African Curriculum for the Twenty-First Century: Report of the Review Committee on Curriculum 2005. Pretoria: DoE.

Schiefelbein E & Schiefelbein P. 2003. From screening to improving quality: The case of Latin America. Assessment in Education, 10(2):141-154.

Shirley D & Hargreaves A. 2006. Data-driven to distraction. Education Week, 26(6):32-33.

The Presidency, Republic of South Africa. 2010. State of the Nation Address by His Excellency Jacob G Zuma, President of the Republic of South Africa, on the occasion of the Joint Sitting of Parliament, Cape Town. [Retrieved 5 August 2014] http://www.thepresidency.gov.za/pebble.asp?relid=211

Vandeyar S & Killen R. 2007. Educators' conceptions and practice of classroom assessment in post-apartheid South Africa. South African Journal of Education, 27(1):101-115.

Volante L & Cherubini L. 2010. Understanding the connections between large-scale assessment and school improvement planning. Canadian Journal of Educational Administration and Policy, 115:25-114.

1. The Annual National Assessments programme involves administering specially developed mathematics and language pencil-and-paper tests to all learners in Grades 1 to 6 and Grade 9 in order to monitor the levels and quality of learning in these foundational skills.

2. South African public ordinary schools are categorised into quintiles. Quintile 1 represents the 'poorest' schools, while Quintile 5 is the 'least poor'. The quintile category is used largely for purposes of allocation of financial resources, and is determined according to the socioeconomic status of the community around the school and the resources and facilities available at the school.

3. The term 'instructional diagnosis' is in itself contestable in the context of assessment of learning outcomes.